The psychology of questions: how to design surveys to get results

Every survey question is a psychological experiment. The words you choose, the order you place them in, the options you provide—each decision shapes how respondents think, feel, and answer. Most survey designers focus on what they want to learn. The best ones also think about how the human mind processes the question.

Understanding the psychology behind how people respond to questions doesn't require a degree in cognitive science. It requires recognizing a handful of well-documented mental patterns and designing around them. When you do, you get data that's more honest, more complete, and more useful than what a naively designed survey would produce.

This guide covers the psychology of questions—the principles that influence survey responses—and how to apply them to get results you can trust.

How framing changes everything

The same question, framed differently, produces different answers. This isn't a flaw in human reasoning—it's how cognition works. People don't evaluate questions in a vacuum. They evaluate them relative to the context the question creates.

Psychologists Amos Tversky and Daniel Kahneman demonstrated this with their famous "Asian disease" experiment. When a public health program was described as saving 200 out of 600 people, most participants chose it over a riskier alternative. When the same outcome was reframed as 400 out of 600 people dying, most participants rejected it in favor of the gamble, even though the two descriptions are mathematically identical.

In surveys, framing shows up everywhere. "How satisfied are you with our service?" primes for positive response. "What problems have you experienced with our service?" primes for negative. Neither is neutral—each frame directs attention toward a specific part of the experience.

The practical takeaway: be deliberate about your frames. If you want a balanced picture, ask the question from both angles and compare. If you want to measure satisfaction, use a true scale (very dissatisfied to very satisfied) rather than a question that assumes a starting position.

The anchoring effect

When people encounter a number before answering a question, that number influences their response, even when it's completely irrelevant. This is anchoring, and it's one of the most robust findings in behavioral research.

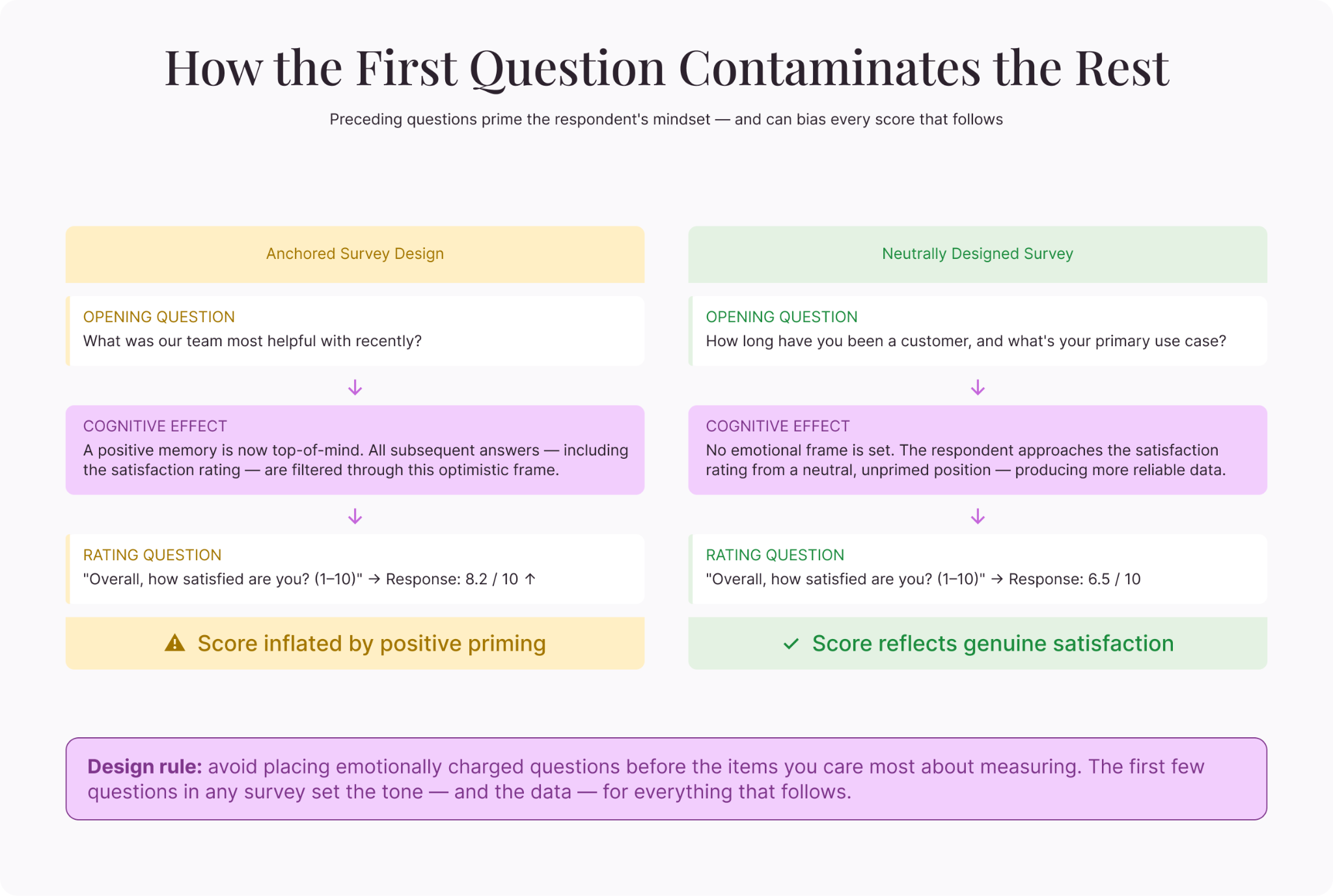

In surveys, anchoring is triggered by preceding questions, example responses, and scale endpoints. If the first question on a customer satisfaction survey asks about a particularly positive experience, the anchor shifts upward—respondents rate subsequent questions more favorably because the positive experience is now their reference point.

Scale endpoints also anchor. A 1-10 scale implicitly suggests that five is average, while a 1-100 scale shifts "average" to 50. The midpoint of whatever scale you choose becomes the default reference, and respondents cluster around it.

Design implication: Be consistent with your scales throughout the survey. Avoid placing emotionally charged questions immediately before the items you care most about measuring. And be aware that the first few questions in any survey set the tone for everything that follows.

Cognitive load and satisficing

Every survey question requires mental effort. The respondent has to read the question, understand it, search their memory for relevant information, form a judgment, and map that judgment onto the response options. That's a lot of cognitive work, and it happens for every single question.

When the cognitive load gets too high—long surveys, complex questions, confusing scales—respondents switch from "optimizing" (giving the best possible answer) to "satisficing" (giving the first acceptable answer). They read less carefully. They default to middle options. They agree with whatever statement is presented. They rush.

Satisficing produces data that looks complete but isn't meaningful. The numbers are there, but they reflect fatigue rather than genuine opinion.

Design implication: Shorter surveys produce better data than longer ones. Simple questions outperform complex ones. If a question requires more than 10 seconds of thought, simplify it or break it into parts. Every question should earn its place by being essential to your research objective.
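One common symptom of satisficing is "straightlining": picking the same option for a long run of rating questions. The following is a minimal Python sketch of how such responses might be flagged for review. The function names and the 0.8 cutoff are illustrative assumptions, not an established standard, and a flagged response is a prompt for a closer look rather than proof of fatigue.

```python
from typing import Sequence


def longest_identical_run(answers: Sequence[int]) -> int:
    """Return the length of the longest streak of identical consecutive answers."""
    if not answers:
        return 0
    longest = current = 1
    for prev, curr in zip(answers, answers[1:]):
        # Extend the streak if the answer repeats, otherwise start a new one.
        current = current + 1 if curr == prev else 1
        longest = max(longest, current)
    return longest


def looks_like_satisficing(answers: Sequence[int], max_run_share: float = 0.8) -> bool:
    """Flag a response when one identical streak covers most of the rating items.

    max_run_share is an assumed cutoff chosen for illustration only.
    """
    if len(answers) < 5:  # assumed minimum; too few items to judge
        return False
    return longest_identical_run(answers) / len(answers) >= max_run_share


engaged = [4, 2, 5, 3, 4, 1, 4, 5]   # varied answers
rushed = [3, 3, 3, 3, 3, 3, 3, 4]    # seven identical midpoint answers in a row

print(looks_like_satisficing(engaged))  # False
print(looks_like_satisficing(rushed))   # True
```

A check like this works best alongside other signals, such as very short completion times, because some respondents genuinely hold the same opinion across several items.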

Social desirability and self-presentation

People don't just answer questions—they perform for an imagined audience. Even in anonymous surveys, respondents shape their answers to present a version of themselves they're comfortable with. They overreport socially approved behaviors (volunteering, exercising, voting) and underreport stigmatized ones (substance use, prejudice, financial struggles).

This social desirability bias is strongest when the topic is sensitive and when the respondent believes—even subconsciously—that their answer could be traced back to them.

Design implication: For sensitive topics, maximize and clearly communicate anonymity. Use indirect question formats (third-person framing, behavioral estimates instead of self-reports). And normalize the "undesirable" behavior in the question framing: "Many people find it difficult to exercise regularly. How many times did you exercise last week?" gives permission for an honest answer.

Question order effects

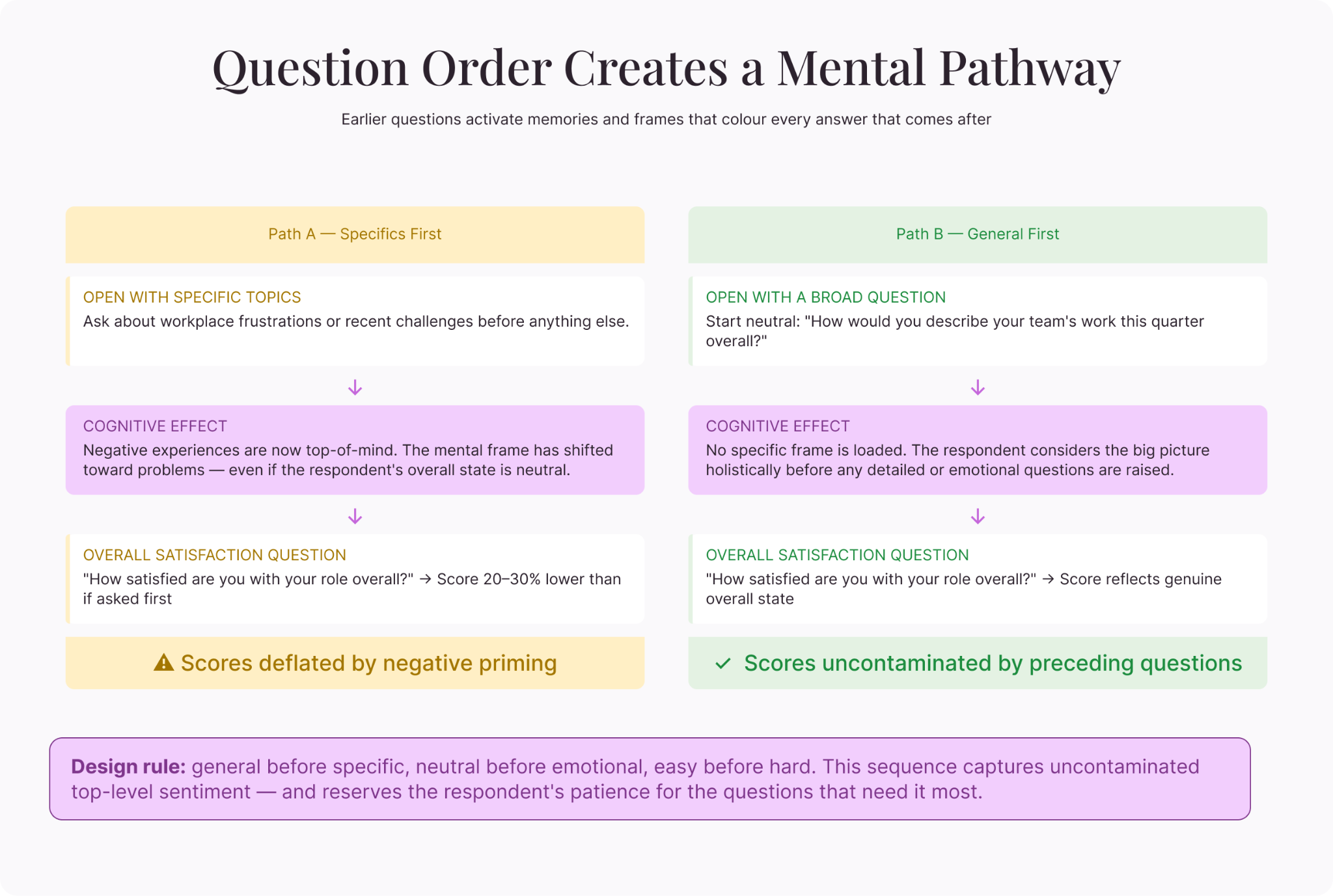

The sequence of questions creates a narrative in the respondent's mind. Earlier questions activate specific memories, emotions, and frameworks that color how subsequent questions are interpreted.

Researchers call this "priming." If you ask about workplace frustrations before asking about overall job satisfaction, satisfaction scores will be lower than if you'd asked about satisfaction first. This is because the frustration questions brought negative experiences to the front of the respondent's mind.

The reverse is also true. Asking about accomplishments before satisfaction inflates satisfaction scores.

Design implication: Lead with broad, general questions before diving into specifics. This captures uncontaminated top-level sentiment. Then move to specific dimensions. Save sensitive or emotionally charged questions for later, after the respondent is engaged and the survey has established a neutral tone.

The paradox of choice in response options

More options seem like they'd produce more precise data. But research on choice overload suggests the opposite. When faced with too many options, people become indecisive, frustrated, or default to the easiest selection rather than the most accurate one.

A five-point scale and a seven-point scale produce similar statistical reliability. A 10-point scale offers marginal improvement in precision but increases cognitive load. Beyond 10 points, the gains evaporate and the costs (confusion, satisficing) dominate.

The same applies to multiple-choice lists. A question with 15 options is harder to process than one with six, and respondents are more likely to select from the top of the list (primacy effect) rather than evaluating every option equally.

Design implication: Keep scales to five or seven points. Limit multiple-choice options to seven or fewer when possible. If you genuinely need a long list, randomize the order so positional bias doesn't systematically favor certain options.
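Randomizing a long option list can be sketched in a few lines of Python. The helper below is hypothetical: the function name, the `pinned_last` parameter, and the idea of seeding with a respondent ID are assumptions made for illustration. It shuffles the substantive options for each respondent while keeping catch-all choices such as "Other" at the bottom, where respondents expect them.

```python
import random
from typing import List, Optional, Sequence


def randomized_options(
    options: Sequence[str],
    pinned_last: Sequence[str] = ("Other", "None of the above"),
    seed: Optional[str] = None,
) -> List[str]:
    """Shuffle answer options, keeping catch-all choices at the end.

    Passing a per-respondent seed (for example, a respondent ID) makes the
    order reproducible for that respondent while varying across respondents.
    """
    rng = random.Random(seed)
    shuffled = [o for o in options if o not in pinned_last]
    pinned = [o for o in options if o in pinned_last]
    rng.shuffle(shuffled)
    return shuffled + pinned


channels = ["Email", "Phone", "Live chat", "Social media", "In person", "Other"]

# Each respondent sees a different order, with "Other" always last.
for respondent_id in ("r-001", "r-002", "r-003"):
    print(respondent_id, randomized_options(channels, seed=respondent_id))
```

Because the order differs from person to person, any primacy effect is spread across all options instead of consistently favoring whichever ones happen to be listed first.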

The power of open-ended questions (used sparingly)

Closed-ended questions are efficient but constrained. They measure within the boundaries you set. Open-ended questions let respondents break those boundaries, introducing ideas, language, and perspectives you didn't anticipate.

Psychologically, open-ended questions engage a different mode of thinking. Instead of recognizing and selecting (a relatively passive process), respondents must recall and construct (an active process). The data is richer but the effort is higher. This means diminishing returns if you overuse them.

Design implication: Use one to three open-ended questions per survey, placed strategically: after a key rating question ("You rated us three out of five. What could we do better?") or at the end as a catch-all. These produce the most actionable qualitative insights without burning out respondents.

The peak-end rule and survey experience

The peak-end rule states that people judge an experience primarily by how it felt at its most intense point (the peak) and at the end, rather than by the average of every moment. This has direct implications for survey design.

If a survey ends with a frustrating, confusing, or overly personal question, respondents will remember the entire survey more negatively—even if the first 90% was fine. If it ends on a positive note (a simple question, a thank-you message, a moment of feeling heard), the overall memory improves.

The peak matters too. A single question that feels intrusive, confusing, or disrespectful can color the respondent's perception of the entire survey—and their willingness to participate next time. Identify which questions carry the highest emotional charge and handle them with extra care: clear framing, prominent anonymity reminders, and easy-to-use response formats.

Design implication: End your survey with a light, easy question or an open-ended "anything else you'd like to share." Avoid placing your most sensitive or complex question last. And review the survey for moments of peak difficulty or discomfort—smooth those peaks wherever possible.

The commitment and consistency effect

Once people commit to a position early in a survey, they tend to answer subsequent questions in a way that's consistent with that initial commitment. If the first question asks "Do you value sustainability?" and the respondent says yes, they're psychologically primed to give sustainability-friendly answers throughout the rest of the survey—even on questions where their true behavior might contradict that stated value.

This effect means early questions don't just gather data—they set the frame for everything that follows. A survey about workplace culture that opens with "Do you believe teamwork is important?" will get different results than one that opens with "How would you describe the communication dynamics on your team?" The first version establishes a norm. The second gathers information.

Design implication: Avoid asking value-laden or aspirational questions early in the survey. Lead with behavioral or observational questions ("How many team meetings did you attend last week?") rather than identity-affirming ones ("Are you a team player?"). This reduces the consistency effect and produces more honest responses throughout.

Putting psychology to work

Understanding the psychology of questions doesn't mean manipulating respondents. It means respecting how human minds actually process information and removing obstacles that prevent honest, thoughtful answers.

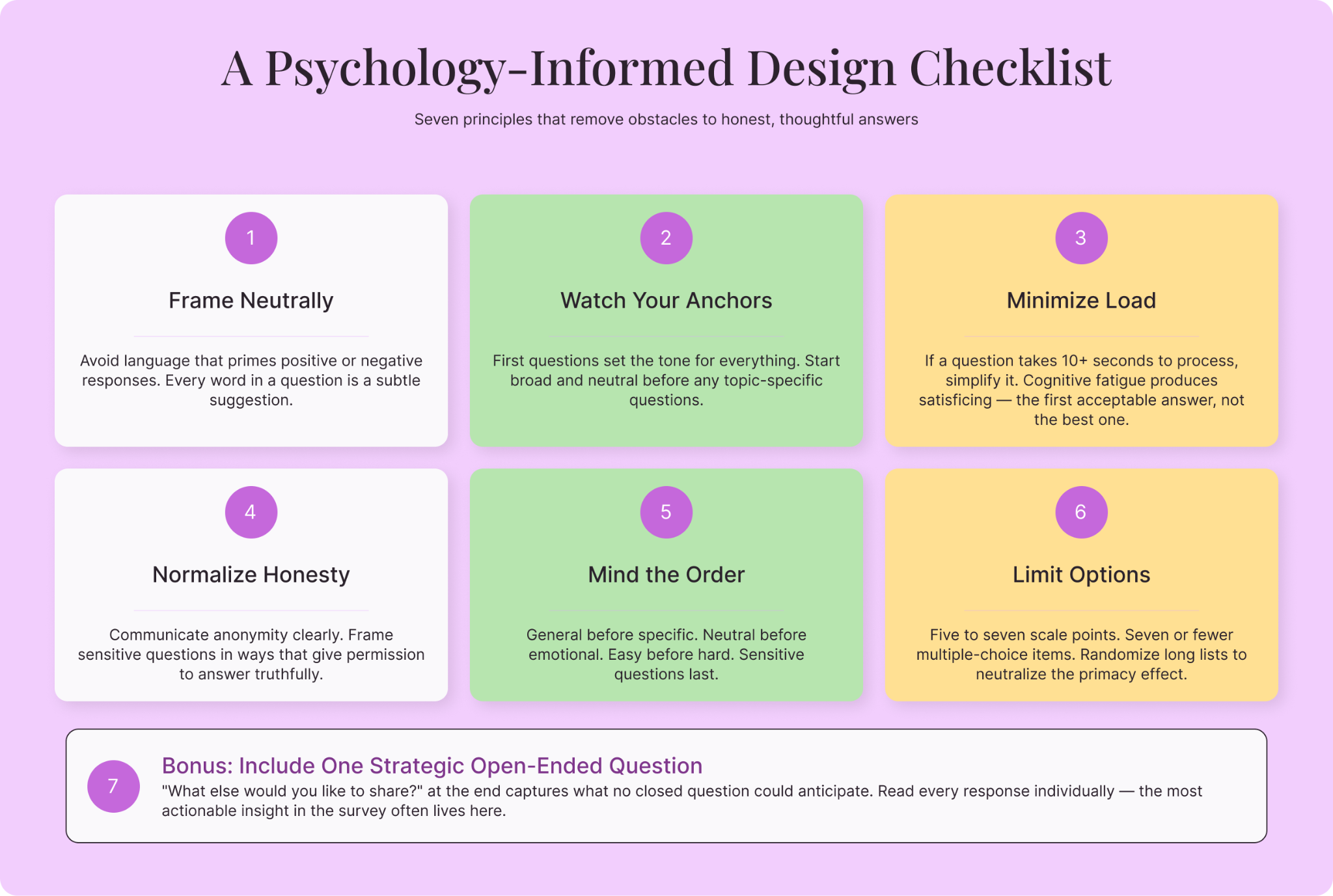

Here's a condensed checklist for your next survey:

- Frame questions neutrally. Avoid language that primes for positive or negative responses.

- Watch your anchors. The first questions set the tone. Start broad and neutral.

- Minimize cognitive load. Keep it short, simple, and clear. If a question requires a paragraph of explanation, it's too complex.

- Normalize honesty. For sensitive topics, use anonymous formats and frame questions that give permission to answer truthfully.

- Mind the order. General before specific. Neutral before emotional. Easy before hard.

- Limit options. Five to seven scale points. Seven or fewer multiple-choice items. Randomize when lists are long.

- Include strategic open-ended questions. One to three, placed where the qualitative depth will be most valuable.
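The countable parts of this checklist can also be checked automatically before a survey goes out. The Python sketch below assumes a hypothetical `Question` structure and a `lint_survey` helper. Neither comes from any real survey tool, and the limits simply mirror this guide's recommendations of five- or seven-point scales, seven or fewer options, and no more than three open-ended questions.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Question:
    text: str
    kind: str                      # "scale", "choice", or "open" (hypothetical labels)
    scale_points: int = 0          # used only when kind == "scale"
    options: List[str] = field(default_factory=list)  # used only when kind == "choice"
    randomized: bool = False       # whether option order is shuffled per respondent


def lint_survey(questions: List[Question], max_open: int = 3, max_options: int = 7) -> List[str]:
    """Return warnings for questions that break the checklist's countable rules."""
    warnings = []
    open_count = sum(1 for q in questions if q.kind == "open")
    if open_count > max_open:
        warnings.append(f"{open_count} open-ended questions; aim for at most {max_open}")
    for number, q in enumerate(questions, start=1):
        if q.kind == "scale" and q.scale_points not in (5, 7):
            warnings.append(f"Q{number}: {q.scale_points}-point scale; consider 5 or 7 points")
        if q.kind == "choice" and len(q.options) > max_options and not q.randomized:
            warnings.append(f"Q{number}: {len(q.options)} options shown in a fixed order")
    return warnings


survey = [
    Question("Overall, how satisfied are you with our service?", "scale", scale_points=10),
    Question("Which features do you use?", "choice", options=[f"Feature {i}" for i in range(1, 10)]),
    Question("Anything else you'd like to share?", "open"),
]

for warning in lint_survey(survey):
    print(warning)
```

A script like this only covers what can be counted. Neutral framing, question order, and tone still need a human read-through.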

The difference between a survey that produces reliable data and one that produces noise often comes down to these principles. The questions themselves might look similar on the surface. But underneath, the psychologically informed version is working with the respondent's mind rather than against it—and the data reflects the difference.
