What is data gathering? Techniques and best practices

Learn what data gathering is, explore key quantitative, qualitative, and digital techniques, and discover best practices for reliable results.

Every decision your business makes rests on information. The quality of that information—where it comes from, how you collect it, and whether you can trust it—determines whether those decisions move you forward or send you sideways. That's why data gathering matters so much, and why getting it right is worth the effort.

Whether you're a marketer trying to understand what your customers actually want, a researcher building a case for a new initiative, or a business owner weighing your next move, the data gathering technique you choose shapes everything that follows. Collect the wrong data, or collect it the wrong way, and even the best analysis won't save you.

This guide breaks down what data gathering really means, walks through the most widely used techniques, and shares the best practices that separate useful data from noise.

What data gathering actually means

Data gathering is the systematic process of collecting information from various sources to answer specific questions or solve particular problems. It's the foundation of research, strategy, and informed decision-making across every industry.

But here's where many people trip up: data gathering isn't the same as data analysis. Gathering is about collection—getting the raw material. Analysis is what you do with it afterward. Conflating the two leads to sloppy collection habits, because people rush to the "interesting" part without building a solid base.

A good data gathering technique has three qualities. It's repeatable, meaning someone else could follow the same steps and get comparable results. It's relevant, meaning the data you collect actually addresses the question you're trying to answer. And it's reliable, meaning the method minimizes errors and biases that could distort your findings.

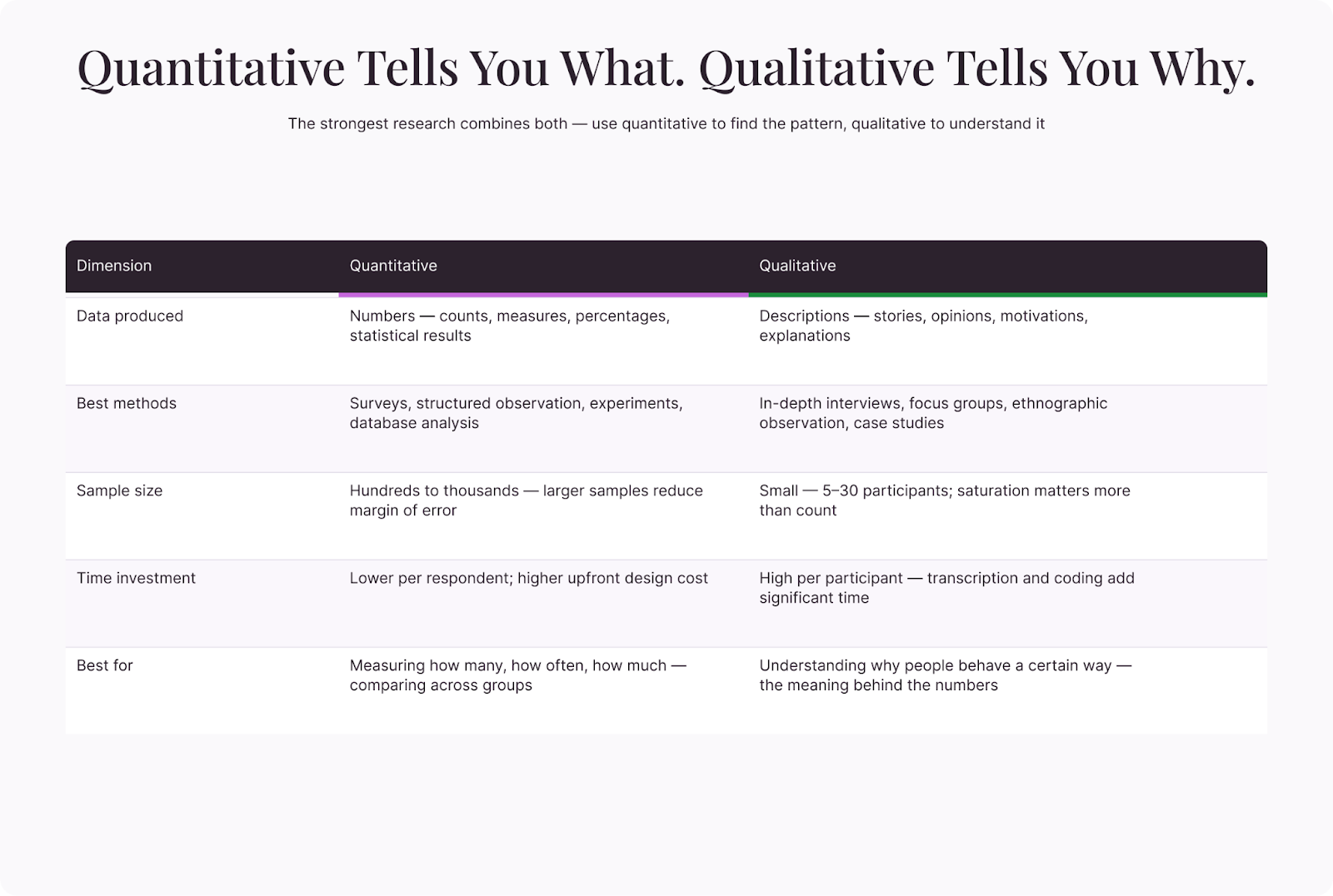

The technique you choose depends on several factors: what you're trying to learn, who you're learning it from, how much time and budget you have, and whether you need numbers (quantitative data) or narratives (qualitative data). Most real-world projects use a combination of both.

Primary vs. secondary data gathering

Before diving into specific techniques, it helps to understand the two broad categories of data gathering.

Primary data gathering

Primary data is information you collect yourself, firsthand, for a specific purpose. You design the study, choose the participants, and control the method. The advantage is precision—you get exactly the data you need. The trade-off is cost and time.

Common primary methods include surveys, interviews, focus groups, observations, and experiments. If you're launching a new product and want to know how your target audience feels about it, primary data gathering is usually the way to go.

Secondary data gathering

Secondary data is information that someone else has already collected. Government databases, published research, industry reports, and publicly available datasets all fall into this category. Secondary data is faster and cheaper to obtain, but it may not fit your exact needs, and you have less control over how it was gathered.

The strongest research combines both. Secondary data helps you understand the landscape and identify gaps, while primary data fills those gaps with targeted, specific insights.

Quantitative data gathering techniques

Quantitative techniques produce numerical data—things you can count, measure, and analyze statistically. They're particularly useful when you need to identify patterns across a large group or test a specific hypothesis.

Surveys and questionnaires

Surveys are the workhorse of quantitative data gathering. They let you reach a large number of people quickly, and the structured format makes it easy to compare responses across your sample.

A well-designed survey uses a mix of question types: multiple choice for quick categorization, rating scales for measuring intensity, and ranking questions for understanding priorities. The key is clarity—every question should have one interpretation and one interpretation only.

Online surveys have made this technique more accessible than ever. You can distribute them through email or social media, or embed them directly on a website. Response rates vary widely depending on your audience and incentive structure. Even a modest response rate can yield statistically meaningful results if the number of completed responses is large enough and the people who respond resemble the people you want to learn about.

That said, a survey doesn't produce good data automatically. The questions you ask, the order you present them in, and the response options you provide all influence the quality of the data you collect. Leading questions push respondents toward specific answers. Ambiguous wording creates noise. And surveys that are too long cause people to disengage partway through, leaving you with incomplete data from fatigued participants. Testing your survey with a small group before full distribution catches most of these issues.
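To put a rough number on "large enough," the standard margin-of-error formula for a proportion is a useful starting point. The sketch below assumes a simple random sample from a large population and a 95% confidence level (z = 1.96); the function names are illustrative, not from any particular survey tool. It says nothing about whether your respondents are representative, so treat it as a floor rather than a guarantee.

```python
import math


def margin_of_error(sample_size, proportion=0.5, z=1.96):
    """Approximate margin of error for a survey proportion.

    Assumes a simple random sample from a large population.
    z=1.96 corresponds to a 95% confidence level, and proportion=0.5
    is the most conservative (widest) case.
    """
    if sample_size <= 0:
        raise ValueError("sample_size must be positive")
    return z * math.sqrt(proportion * (1 - proportion) / sample_size)


def required_sample_size(target_margin, proportion=0.5, z=1.96):
    """Smallest number of completed responses for a target margin of error."""
    if not 0 < target_margin < 1:
        raise ValueError("target_margin must be between 0 and 1")
    return math.ceil(z ** 2 * proportion * (1 - proportion) / target_margin ** 2)


if __name__ == "__main__":
    for n in (100, 400, 1000):
        print(f"{n} responses: +/- {margin_of_error(n) * 100:.1f} points")
    print("Responses needed for +/- 5 points:", required_sample_size(0.05))
```

Under these assumptions, a margin of about five percentage points takes roughly 385 completed responses. Note that this counts completed responses, not invitations sent.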

Structured observations

Observation means watching and recording behavior as it happens, without interfering. In quantitative observation, you use a predefined framework—counting how many times a specific behavior occurs, measuring duration, or categorizing actions into predetermined buckets.

Retail stores use this technique to track foot traffic patterns. Usability researchers watch how people navigate a website and record where they click, pause, or abandon a task. The strength of structured observation is that it captures what people actually do, which sometimes differs sharply from what they say they do.
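As a minimal sketch of what a predefined framework can look like in practice, the snippet below tallies observed events against a fixed coding scheme. The category names are hypothetical placeholders for whatever behaviors your study defines up front.

```python
# Hypothetical coding scheme, fixed before observation starts.
CATEGORIES = ("clicked_cta", "paused", "abandoned_task")


def tally_observations(events, categories=CATEGORIES):
    """Count how often each predefined behavior was recorded.

    Events outside the coding scheme are collected separately instead of
    being silently dropped, so the observer can review them later.
    """
    counts = {category: 0 for category in categories}
    uncoded = []
    for event in events:
        if event in counts:
            counts[event] += 1
        else:
            uncoded.append(event)
    return counts, uncoded


if __name__ == "__main__":
    session = ["paused", "clicked_cta", "paused", "scrolled"]
    counts, uncoded = tally_observations(session)
    print(counts)
    print("Needs review:", uncoded)
```

Keeping the uncoded events visible is a design choice: a long list of them is a hint that the coding scheme is missing a category.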

Experiments

Experiments are the most rigorous approach for establishing cause and effect. You manipulate one variable (the independent variable), hold everything else constant, and measure the outcome (the dependent variable). If the outcome changes, you can attribute it to your manipulation with a high degree of confidence.

A/B testing in marketing is a familiar example. You show one version of a landing page to a randomly assigned half of your audience and a different version to the other half, then measure which one drives more signups. Random assignment and the controlled setup let you isolate the impact of specific changes.

Experiments require careful planning. You need a large enough sample to detect meaningful differences, a genuine control group, and a clear hypothesis going in. Poorly designed experiments produce ambiguous results that don't help anyone.

The biggest strength of experiments is internal validity—you can be confident about what caused the result. The biggest weakness is external validity—controlled environments don't always mirror real-world conditions. An A/B test on a landing page tells you which version performed better in the test, but factors like seasonality, traffic source, and user intent can all change the outcome when you apply the winning version more broadly. Plan for follow-up measurement after implementation, not just during the experiment itself.
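One common way to check whether an A/B difference is more than chance is a two-proportion z-test. The sketch below is one option among several, not the only valid analysis, and it assumes visitors were randomly assigned and that both groups are reasonably large. The function name and the signup numbers are made up for illustration.

```python
import math


def two_proportion_z_test(conversions_a, visitors_a, conversions_b, visitors_b):
    """Two-sided z-test for the difference between two conversion rates.

    Returns (z, p_value). Uses the pooled-proportion standard error,
    which relies on a normal approximation and large samples.
    """
    rate_a = conversions_a / visitors_a
    rate_b = conversions_b / visitors_b
    pooled = (conversions_a + conversions_b) / (visitors_a + visitors_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    if std_error == 0:
        raise ValueError("cannot test when no one or everyone converted")
    z = (rate_b - rate_a) / std_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical results: version A vs. version B of a landing page.
    z, p = two_proportion_z_test(100, 1000, 130, 1000)
    print(f"z = {z:.2f}, p = {p:.3f}")
```

A small p-value suggests the difference is unlikely to be noise within the test itself. It doesn't address the external validity concerns above, which is why follow-up measurement still matters.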

Existing databases and records

Sometimes the data you need already exists inside your organization. Sales records, customer support logs, website analytics, and transaction histories are rich sources of quantitative data. Mining these records can reveal trends you didn't know were there—seasonal patterns in purchasing, common complaints that spike after product updates, or customer segments that behave differently from each other.

The challenge with existing records is data quality. Records collected for operational purposes weren't designed with your research question in mind. Fields may be incomplete, definitions may have changed over time, and the data might contain errors nobody bothered to fix because they didn't affect day-to-day operations.
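A quick completeness check is often a sensible first step before trusting operational records. The sketch below assumes the records have already been loaded as a list of dictionaries; the field names are hypothetical.

```python
def missing_rates(records, fields):
    """Share of records where each field is absent, None, or blank."""
    if not records:
        return {field: 0.0 for field in fields}
    rates = {}
    for field in fields:
        missing = sum(
            1
            for record in records
            if record.get(field) is None or str(record.get(field)).strip() == ""
        )
        rates[field] = missing / len(records)
    return rates


if __name__ == "__main__":
    # Hypothetical support-log extract.
    support_log = [
        {"ticket_id": 1, "product": "basic", "resolved_at": "2024-01-03"},
        {"ticket_id": 2, "product": "", "resolved_at": None},
        {"ticket_id": 3, "product": "pro"},
        {"ticket_id": 4, "product": "pro", "resolved_at": "2024-01-09"},
    ]
    for field, rate in missing_rates(support_log, ["product", "resolved_at"]).items():
        print(f"{field}: {rate:.0%} missing")
```

This only catches gaps. Changed definitions and silent errors still need a conversation with whoever maintains the system.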

Qualitative data gathering techniques

Qualitative techniques produce descriptive, non-numerical data—stories, opinions, motivations, and explanations. They're indispensable when you need to understand why people behave a certain way, not just what they do.

In-depth interviews

One-on-one interviews let you explore a topic in depth with a single participant. Unlike surveys, interviews are flexible—you can follow up on unexpected answers, ask for examples, and adjust your line of questioning based on what you're hearing.

Semi-structured interviews work well for most purposes. You prepare a list of key questions in advance but leave room to explore tangents when they seem promising. This balance gives you consistency across interviews while still capturing the unexpected insights that make qualitative research valuable.

The downsides are time and scale. Interviews are labor-intensive to conduct and even more labor-intensive to analyze. A typical qualitative study might include 15-30 interviews, which means hours of transcription and coding before you can identify themes.

Focus groups

Focus groups bring together 6-10 participants for a guided discussion on a specific topic. The group dynamic is the point—participants react to each other's ideas, build on them, challenge them, and sometimes reveal perspectives they wouldn't have articulated on their own.

A skilled moderator is essential. Without one, dominant personalities can hijack the conversation while quieter participants stay silent. The moderator's job is to keep the discussion focused, draw out every voice, and prevent groupthink from steering the results.

Focus groups are particularly useful in the early stages of research, when you're still figuring out the right questions to ask. They generate a wide range of perspectives quickly and can surface issues or opportunities you hadn't considered.

One practical consideration: focus groups work best when participants share enough common ground to have a productive conversation but enough diversity to generate different viewpoints. A group of marketing managers from different industries will spark richer discussion than a group from the same company, where hierarchy and office politics can suppress honest opinions. Plan your recruitment with this balance in mind.

Ethnographic observation

Where structured observation counts and categorizes, ethnographic observation immerses the researcher in the environment they're studying. The goal is to understand behavior in context—not just what happens, but the social, cultural, and situational factors that shape it.

An ethnographic researcher studying workplace communication might spend weeks embedded in an office, observing meetings, hallway conversations, and email patterns. The depth of understanding this produces is unmatched, but so is the time commitment.

This technique is most common in academic and UX research. If you're designing a product for a specific community, ethnographic research helps you understand their world on their terms rather than imposing your assumptions.

Case studies

Case studies take a deep dive into a single instance—one company, one project, one event—and examine it from multiple angles. They combine interviews, document analysis, and observation to build a comprehensive picture.

The strength of case studies is richness. They capture complexity that surveys and experiments can't. The limitation is generalizability—you can't confidently apply findings from one case to every situation. But as a source of hypotheses and practical lessons, case studies are hard to beat.

Digital data gathering techniques

The internet has created entirely new ways to collect data, many of which blend quantitative and qualitative approaches.

Web analytics

Every visit to your website generates data: which pages people view, how long they stay, where they click, what device they're using, and where they came from. Web analytics platforms organize this into dashboards and reports that reveal how people interact with your digital presence.

The volume of data can be overwhelming. The best practice is to start with specific questions. For instance, "Where do visitors drop off in our signup flow?" is more useful than "Tell me everything about our traffic." Let those questions guide which metrics you focus on.
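The signup-flow question can be answered with very little data: the number of visitors who reached each step. The sketch below assumes you can export those counts from your analytics platform; the step names and numbers are invented for illustration.

```python
def funnel_drop_off(step_counts):
    """Return (from_step, to_step, drop_off_rate) for each consecutive pair.

    step_counts is an ordered list of (step_name, visitors_reaching_step).
    """
    results = []
    for (name_a, count_a), (name_b, count_b) in zip(step_counts, step_counts[1:]):
        rate = 0.0 if count_a == 0 else (count_a - count_b) / count_a
        results.append((name_a, name_b, rate))
    return results


if __name__ == "__main__":
    # Hypothetical signup flow.
    signup_flow = [
        ("landing", 1000),
        ("form_started", 400),
        ("form_submitted", 300),
        ("email_confirmed", 270),
    ]
    for start, end, rate in funnel_drop_off(signup_flow):
        print(f"{start} -> {end}: {rate:.0%} drop-off")
    worst = max(funnel_drop_off(signup_flow), key=lambda row: row[2])
    print("Biggest drop-off after:", worst[0])
```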

Social media listening

Social media platforms are a massive, continuously updated source of public opinion data. Monitoring tools let you track mentions of specific topics, brands, or keywords and analyze the sentiment, volume, and themes of those conversations.

This data is unstructured and messy, which makes it harder to analyze than survey responses. But it has a key advantage: it's unsolicited. People are sharing their genuine opinions without being prompted by a researcher, which can reveal attitudes that surveys miss.

Online behavior tracking

Heatmaps, session recordings, and click tracking show you exactly how people interact with a digital interface. You can see where they hover, what they scroll past, and where they get confused. This data is invaluable for UX research and conversion optimization.

Privacy considerations are important here. Always be transparent about what you're tracking and ensure you're complying with relevant data protection regulations. Data gathered without consent isn't just unethical—in many jurisdictions, it's illegal.

Sensor and Internet of Things data

Connected devices generate a continuous stream of data—temperature readings, location pings, usage patterns, environmental measurements, and equipment performance metrics. In manufacturing, sensors on production lines track throughput and flag anomalies before they become breakdowns. In agriculture, soil moisture sensors inform irrigation decisions. In logistics, GPS tracking optimizes delivery routes.

This type of data gathering is passive and continuous, which means it captures patterns that periodic surveys or observations would miss. The challenge is volume. Sensor networks can generate enormous datasets that require automated processing and storage infrastructure. Define clearly which metrics matter before you start collecting, or you'll drown in data that's technically available but practically useless.

Best practices for effective data gathering

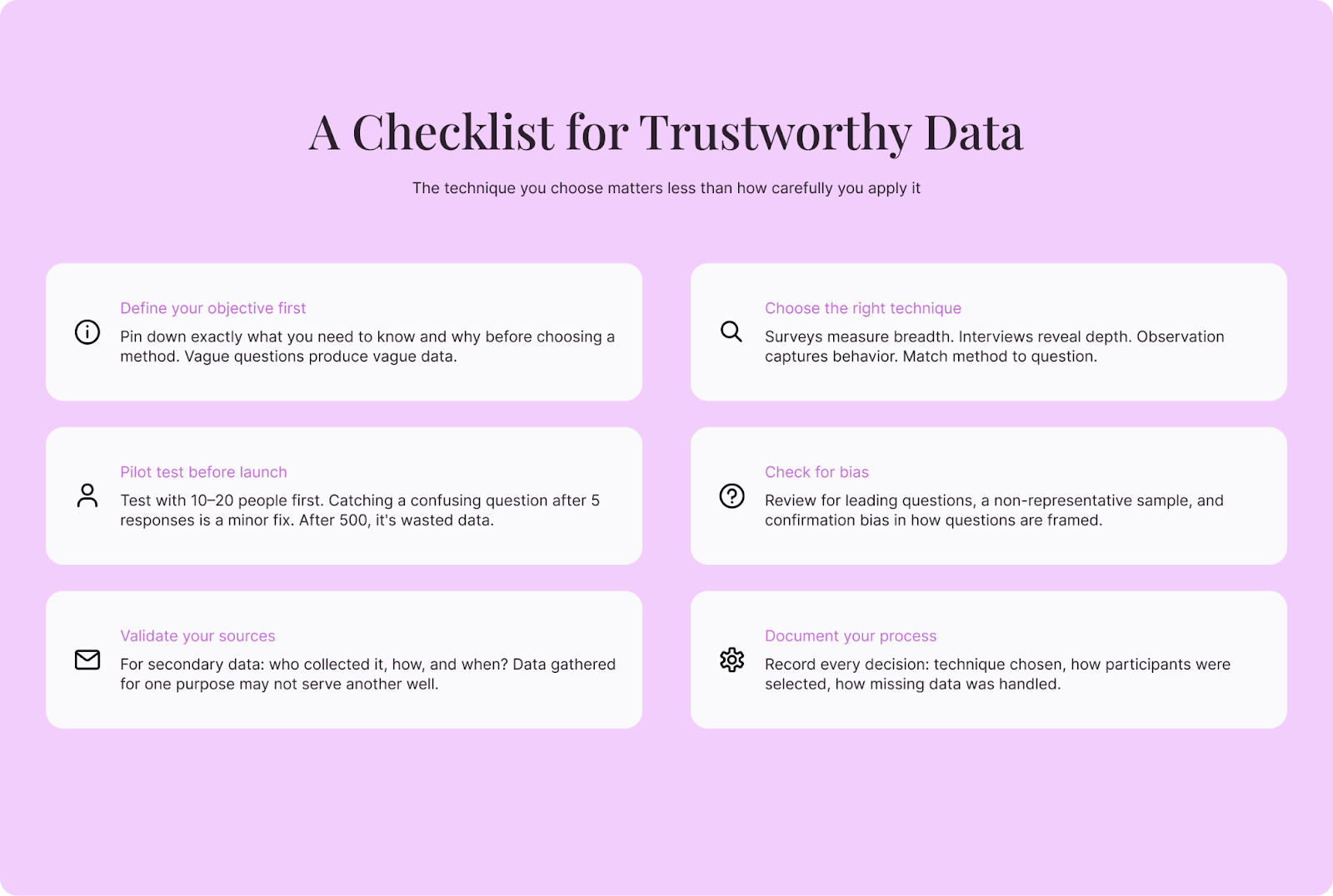

Knowing the techniques is one thing. Using them well is another. These best practices apply regardless of which method you choose.

Define your objectives before you start

The single most common mistake in data gathering is collecting data without a clear purpose. "Let's gather some data" is not a research question. "What percentage of our customers would pay 20% more for faster shipping?" is.

Vague objectives lead to vague data. Pin down exactly what you need to know, why you need to know it, and how you'll use the answer. This clarity will guide every other decision—which technique to use, who to include, and how much data is enough.

Choose the right technique for your question

Not every technique fits every question. Surveys are great for measuring how widespread an opinion is, but terrible for understanding why someone holds it. Interviews reveal motivation and context, but can't tell you whether those motivations are common across your audience.

Match the technique to the question, and consider combining methods. A short survey to identify patterns, followed by interviews to explore the most interesting findings, often produces stronger results than either method alone.

Design for quality, not just quantity

More data isn't always better data. A thousand survey responses full of careless answers are less valuable than 200 thoughtful ones. Focus on reaching the right people, asking clear questions, and creating conditions that encourage honest, considered responses.

For surveys, this means keeping them short, testing them before launch, and avoiding leading questions. For interviews, it means building rapport, asking open-ended questions, and giving participants time to think. For observations, it means training observers thoroughly and using consistent recording methods.

Watch out for bias

Bias can creep in at every stage of data gathering. Selection bias occurs when your sample doesn't represent the population you're studying. Response bias happens when participants give answers they think you want to hear. Confirmation bias affects researchers who unconsciously seek data that supports their existing beliefs.

You can't eliminate bias entirely, but you can reduce it. Use random sampling when possible. Phrase questions neutrally. Have someone outside the project review your survey or interview guide. And be honest in your reporting about the limitations of your data.
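Random sampling is simple to do if you have a complete list of the population you care about. The sketch below assumes such a list exists (often the hard part) and uses a hypothetical customer list. The optional seed makes the draw reproducible, which helps when you document your process.

```python
import random


def draw_random_sample(population, sample_size, seed=None):
    """Draw a simple random sample without replacement.

    Every member of the population has the same chance of being selected.
    Passing a seed makes the selection reproducible.
    """
    rng = random.Random(seed)
    return rng.sample(list(population), sample_size)


if __name__ == "__main__":
    # Hypothetical customer list.
    customers = [f"customer_{i}" for i in range(1000)]
    invited = draw_random_sample(customers, 50, seed=42)
    print(len(invited), "customers selected")
```

A random draw protects against selection bias in who gets invited. It doesn't control who chooses to respond.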

Pilot test everything

Before launching a full data gathering effort, run a small pilot test. Send your survey to 10-20 people and see if they interpret the questions the way you intended. Conduct two or three practice interviews and notice where the conversation stalls or goes off track.

Pilot testing catches problems early, when they're cheaper and easier to fix. Just think: discovering a confusing question after you've already collected 500 responses means your data might be useless. But discovering it after five test responses? All that requires is a minor adjustment.

Document your process

Record every decision you make during data gathering: why you chose a particular technique, how you selected participants, what questions you asked, and how you handled missing or ambiguous data. This documentation serves two purposes.

First, it makes your findings more credible. Anyone reviewing your work can see exactly how you arrived at your conclusions and judge whether your methods were sound. Second, it makes your process repeatable. If you need to gather similar data in the future, you'll have a blueprint to follow instead of starting from scratch.

Respect privacy and ethics

Data gathering often involves collecting information from real people. Treat that responsibility seriously. Obtain informed consent. Explain how the data will be used. Store it securely. Anonymize it when possible. And never collect more than you need.
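Two of these habits, keeping only what you need and removing direct identifiers, can be built into the way you store responses. The sketch below is one possible approach under stated assumptions: the field names are hypothetical, and the secret key is assumed to be stored separately from the data. Replacing an email with a keyed hash is pseudonymization rather than full anonymization, because anyone holding the key can link records back to a person.

```python
import hashlib
import hmac


def pseudonymize(identifier, secret_key):
    """Replace an identifier with a keyed hash (HMAC-SHA256).

    The same identifier always maps to the same pseudonym, so responses
    from one participant can still be linked to each other.
    """
    normalized = identifier.strip().lower().encode("utf-8")
    return hmac.new(secret_key, normalized, hashlib.sha256).hexdigest()


def minimize_record(record, needed_fields, id_field, secret_key):
    """Keep only the fields the study needs and swap the ID for a pseudonym."""
    slim = {field: record[field] for field in needed_fields if field in record}
    slim["participant_id"] = pseudonymize(record[id_field], secret_key)
    return slim


if __name__ == "__main__":
    # Placeholder key for illustration only; load a real one from secure storage.
    key = b"replace-with-a-secret-key"
    raw = {"email": "Ana@Example.com", "phone": "555-0100", "age_band": "25-34", "rating": 4}
    print(minimize_record(raw, ["age_band", "rating"], "email", key))
```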

Ethical data gathering isn't just a legal obligation—it's a practical one. People who trust your process give more honest answers. And a reputation for handling data responsibly makes it easier to recruit participants for future research.

Common pitfalls to avoid

Even experienced researchers can fall into traps that compromise their data. Here are the ones that come up most often:

- Asking leading questions – "Don't you think our checkout process is too complicated?" pushes respondents toward a specific answer. Neutral framing gets you honest data.

- Sampling convenience over relevance – Gathering data from whoever is easiest to reach (your colleagues, your social media followers) instead of the people who actually represent your target audience skews results.

- Ignoring non-responses – People who don't respond to your survey may differ systematically from those who do. A 15% response rate doesn't just mean you have fewer data points—it means your data may not represent the full picture.

- Confusing correlation with causation – Just because two variables move together doesn't mean one causes the other. Establishing causation requires experiments or very careful statistical controls.

- Collecting data you won't use – Every extra question on a survey, every additional metric you track, adds noise and reduces response quality. Collect what you need and nothing more.

- Failing to account for context – Data gathered in one context may not apply in another. Customer preferences measured during a holiday season may not reflect year-round behavior. Employee sentiment captured during a restructuring reflects crisis-mode thinking, not baseline attitudes. Always note the conditions under which data was collected and factor those conditions into your interpretation.

- Relying on a single source – One data gathering technique gives you one perspective. A survey tells you what people say. Observation tells you what they do. Transaction data tells you what they buy. Relying on any single source leaves you with blind spots that only become apparent after you've already acted on incomplete information.
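The non-response pitfall above is easier to spot when you break the response rate down by segment. The sketch below assumes you know how many people in each segment were invited; the segment names and counts are hypothetical, chosen so the overall rate works out to 15%.

```python
def response_rates_by_segment(invited, responded):
    """Response rate per segment, given invited and responded counts.

    Both arguments map a segment name to a count. Segments with no
    responses recorded are treated as zero responses.
    """
    return {
        segment: (responded.get(segment, 0) / count if count else 0.0)
        for segment, count in invited.items()
    }


if __name__ == "__main__":
    invited = {"new_customers": 1000, "long_term_customers": 1000}
    responded = {"new_customers": 50, "long_term_customers": 250}

    overall = sum(responded.values()) / sum(invited.values())
    print(f"Overall response rate: {overall:.0%}")
    for segment, rate in response_rates_by_segment(invited, responded).items():
        print(f"{segment}: {rate:.0%}")
```

In this made-up case, most of the answers come from long-term customers, so the results would say more about them than about your customer base as a whole.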

Choosing the right technique: a decision framework

With so many data gathering options, the choice can feel overwhelming. Fortunately, a simple framework helps narrow it down. Start by answering these four questions:

What do you need to know? If you need to measure how many, how often, or how much, use quantitative methods. If you need to understand why, how, or what it feels like, use qualitative methods. If you need both, plan for a mixed-methods approach.

Who has the information? If the information lives inside people's heads (opinions, preferences, experiences), you need surveys, interviews, or focus groups. If it lives in systems (databases, analytics platforms, transaction logs), you need data mining and analysis. If it lives in behavior (what people actually do versus what they say), you need observation.

What resources do you have? Large-scale surveys and experiments require budget for distribution, incentives, and statistical analysis. Interviews and ethnographic research require skilled researchers and significant time. Secondary data and existing records are cheaper to access but may require cleaning and interpretation. Be realistic about what you can execute well given your constraints.

How will you use the results? If the results need to convince skeptical stakeholders, quantitative data with statistical backing carries more weight. If the results need to generate creative ideas or inform product design, qualitative insights are often more useful. If the results need to stand up to regulatory or academic scrutiny, your method needs to meet formal standards for rigor and documentation.
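The first two questions map cleanly onto a lookup, which can be handy as a planning checklist. The sketch below encodes only those two questions, using labels invented for this example; resources and intended use still call for judgment that a lookup table can't capture.

```python
# Labels are illustrative shorthand for the first two framework questions.
APPROACH_BY_QUESTION = {
    "how_many": "quantitative",   # how many, how often, how much
    "why": "qualitative",         # why, how, what it feels like
    "both": "mixed methods",
}

TECHNIQUES_BY_SOURCE = {
    "people": ["surveys", "interviews", "focus groups"],
    "systems": ["data mining and analysis of existing records"],
    "behavior": ["observation"],
}


def suggest_methods(question_type, information_source):
    """Return (approach, candidate_techniques) for a research question."""
    if question_type not in APPROACH_BY_QUESTION:
        raise ValueError(f"unknown question_type: {question_type!r}")
    if information_source not in TECHNIQUES_BY_SOURCE:
        raise ValueError(f"unknown information_source: {information_source!r}")
    return (
        APPROACH_BY_QUESTION[question_type],
        list(TECHNIQUES_BY_SOURCE[information_source]),
    )


if __name__ == "__main__":
    approach, techniques = suggest_methods("why", "people")
    print(approach, "->", ", ".join(techniques))
```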

Bringing it all together

Data gathering is the foundation that everything else rests on: your analysis, your strategy, your decisions. Even so, the technique you choose matters less than how thoughtfully you apply it. A simple survey done well will usually outperform a complex mixed-methods study done carelessly.

Start with a clear question. Pick the technique that fits. Design for quality. Test before you launch. And document everything so you—and anyone who follows you—can build on what you've learned.

The best data gathering technique isn't the fanciest or the newest. It's the one that gets you reliable answers to the questions that actually matter.